Difference between revisions of "Kaustubh Shivdikar"

m (Lady bug test) (Tag: Visual edit) |

m (GNN Mark added) (Tag: Visual edit) |

||

| Line 86: | Line 86: | ||

|} | |} | ||

| − | *'''GNNMark: A benchmark suite to characterize graph neural network training on GPUs''' ([https://ispass.org/ispass2021/ ISPASS 2021]) | + | *<br />[[File:Mini GNN.png|right|frameless|67x67px]]'''GNNMark: A benchmark suite to characterize graph neural network training on GPUs''' ([https://ispass.org/ispass2021/ ISPASS 2021]) [PDF] |

| + | **[https://www.linkedin.com/in/trinayan-baruah-30/ Trinayan Baruah], '''[[Kaustubh Shivdikar]]''', [https://www.linkedin.com/in/shi-dong-neu/ Shi Dong], [https://syifan.github.io/ Yifan Sun], [https://www.linkedin.com/in/saiful-mojumder/ Saiful A Mojumder], [https://scholar.google.com/citations?user=do5tcEAAAAAJ&hl=en Kihoon Jung], [https://sites.google.com/ucam.edu/jlabellan/ José L. Abellán], [https://www.linkedin.com/in/ukidaveyash/ Yash Ukidave], [https://www.bu.edu/eng/profile/ajay-joshi/ Ajay Joshi], [http://icn.kaist.ac.kr/~jjk12/ John Kim], [https://ece.northeastern.edu/fac-ece/kaeli.html David Kaeli] | ||

| + | |||

| + | <br /> | ||

| + | {| class="wikitable mw-collapsible mw-collapsed" | ||

| + | |+Abstract | ||

| + | !Abstract | ||

| + | |- | ||

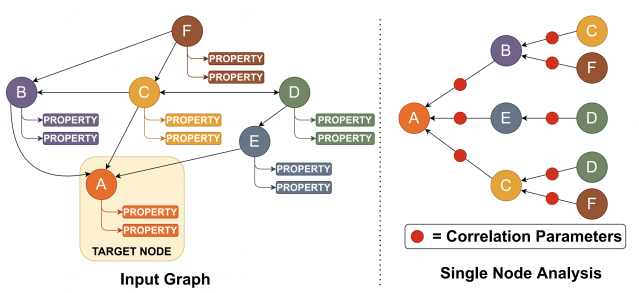

| + | |Graph Neural Networks (GNNs) have emerged as a promising class of Machine Learning algorithms to train on non-euclidean data. GNNs are widely used in recommender systems, drug discovery, text understanding, and traffic forecasting. Due to the energy efficiency and high-performance capabilities of GPUs, GPUs are a natural choice for accelerating the training of GNNs. Thus, we want to better understand the architectural and system level implications of training GNNs on GPUs. Presently, there is no benchmark suite available designed to study GNN training workloads. | ||

| + | |||

| + | |||

| + | In this work, we address this need by presenting GNNMark, a feature-rich benchmark suite that covers the diversity present in GNN training workloads, datasets, and GNN frameworks. Our benchmark suite consists of GNN workloads that utilize a variety of different graph-based data structures, including homogeneous graphs, dynamic graphs, and heterogeneous graphs commonly used in a number of application domains that we mentioned above. We use this benchmark suite to explore and characterize GNN training behavior on GPUs. We study a variety of aspects of GNN execution, including both compute and memory behavior, highlighting major bottlenecks observed during GNN training. At the system level, we study various aspects, including the scalability of training GNNs across a multi-GPU system, as well as the sparsity of data, encountered during training. The insights derived from our work can be leveraged by both hardware and software developers to improve both the hardware and software performance of GNN training on GPUs. | ||

| + | |- | ||

| + | |[[File:GNN Analysis.png|639x639px]] | ||

| + | Graph Neural Network Analysis | ||

| + | |} | ||

| + | |||

*'''SMASH: Sparse Matrix Atomic Scratchpad Hashing''' ([https://www.proquest.com/docview/2529815748?pq-origsite=gscholar&fromopenview=true MS Thesis, 2021]) | *'''SMASH: Sparse Matrix Atomic Scratchpad Hashing''' ([https://www.proquest.com/docview/2529815748?pq-origsite=gscholar&fromopenview=true MS Thesis, 2021]) | ||

*'''Student cluster competition 2018, team northeastern university: Reproducing performance of a multi-physics simulations of the Tsunamigenic 2004 Sumatra Megathrust earthquake on the AMD EPYC 7551 architecture''' ([https://sc18.supercomputing.org/ SC 2018]) | *'''Student cluster competition 2018, team northeastern university: Reproducing performance of a multi-physics simulations of the Tsunamigenic 2004 Sumatra Megathrust earthquake on the AMD EPYC 7551 architecture''' ([https://sc18.supercomputing.org/ SC 2018]) | ||

Revision as of 01:03, 20 August 2022

I am a Ph.D. candidate in the NUCAR lab at Northeastern University, working under the guidance of Dr. David Kaeli. My research focuses on designing hardware accelerators for sparse graph workloads.

My expertise lies in:

- Computer Architecture Simulator Design

- Graph Neural Network Accelerators

- Sparse Matrix Accelerators

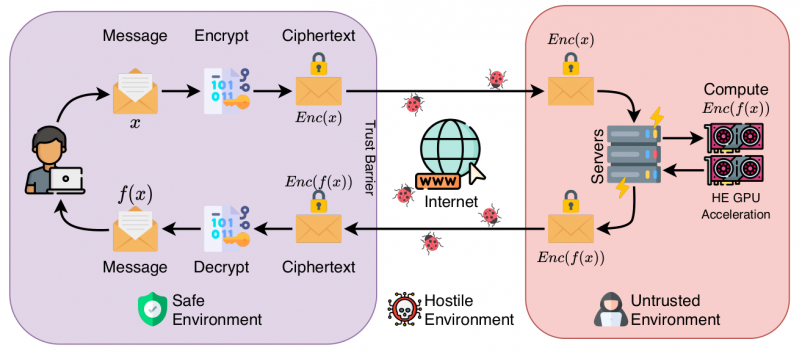

- Homomorphic Encryption Accelerators

- GPU Kernel Design

Contact: shivdikar.k [at] northeastern [dot] edu, mail [at] kaustubh [dot] us

Education

- PhD - Computer Engineering, Northeastern University [Expected Fall 2022]

- MS - Electrical and Computer Engineering, Northeastern University [May 2021]

- BS - Electrical Engineering, Veermata Jijabai Technological Institute [May 2016]

Work

- Summer-Fall 2020 Coop: Parallel Computing Lab @ Intel Labs with Fabrizio Petrini.

- Summer-Fall 2019 Coop: Parallel Computing Lab @ Intel Labs with Fabrizio Petrini.

- Summer-Fall 2018 Coop: Mobile Robotics @ Omron Adept with George Paul.

Recent News

- June 2022: Mentored Lina Adkins on the GNN Acceleration project in the REU-Pathways program

- May 2022: Served as Submission Chair for the HPCA 2023 conference

- Jan 2020: Taught the GPU Programming Course at NEU

- April 2019: Graduate Innovator Award at the RISE 2019 Research Expo for our poster Pi-Tiles

- April 2018: Best Poster Award at the RISE 2018 Research Expo for our poster The Prime Hexagon

- Nov 2018: Mentored the NEU team for the Student Cluster Contest at the Supercomputing Conference 2018

- Nov 2017: Joined the NEU team for the Student Cluster Contest at the Supercomputing Conference 2017

Publications

- Accelerating Polynomial Multiplication for Homomorphic Encryption on GPUs (SEED 2022) [PDF]

- GNNMark: A benchmark suite to characterize graph neural network training on GPUs (ISPASS 2021) [PDF]

- SMASH: Sparse Matrix Atomic Scratchpad Hashing (MS Thesis, 2021)

- Student cluster competition 2018, team northeastern university: Reproducing performance of a multi-physics simulations of the Tsunamigenic 2004 Sumatra Megathrust earthquake on the AMD EPYC 7551 architecture (SC 2018)

- Speeding up DNNs using HPL based Fine-grained Tiling for Distributed Multi-GPU Training (BARC 2018)

- Video steganography using encrypted payload for satellite communication (Aerospace Conference 2017)

- Missing 'Middle Scenarios': Uncovering Nuanced Conditions in Latin America's Housing Crisis (Cityscape 2017)

- Dynamic power allocation using Stackelberg game in a wireless sensor network (Aerospace Conference 2016)

- Automatic image annotation using a hybrid engine (Indicon 2015)

Posters

- JAXED

- Pi-Tiles

- The Prime Hexagon

What is KTB Wiki?

KTB Wiki, because the best way to store your knowledge is in an indexed SQL database.

This website was built on KTB Wiki. KTB Wiki is my side project and an attempt to consolidate the knowledge gained during my Ph.D. journey. Though many other platforms provide similar services, creating KTB Wiki was a learning experience: it taught me the concepts of indexing, load balancing, and in-memory file systems. KTB Wiki was built using MediaWiki and is intended for research purposes only.

Interesting Reads